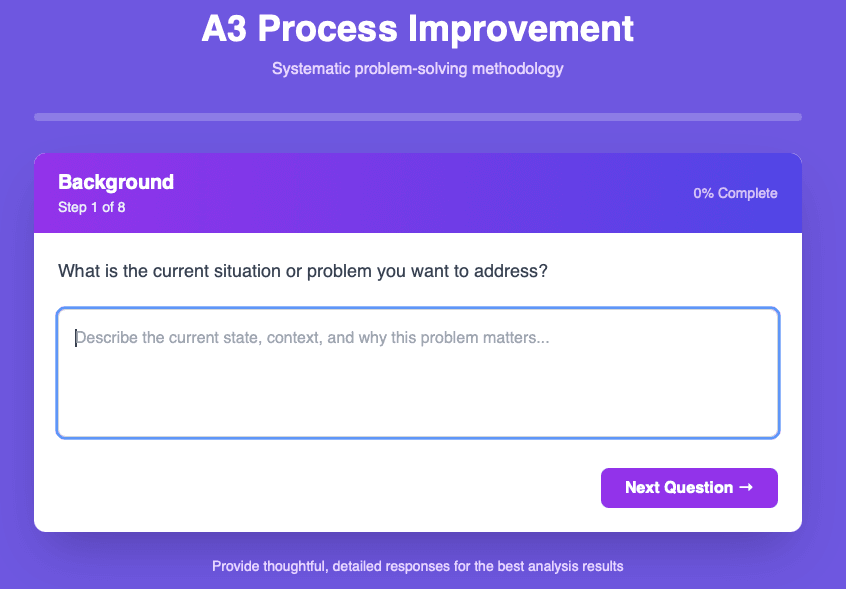

Predicting housing prices

XGBoost Regressor predictions

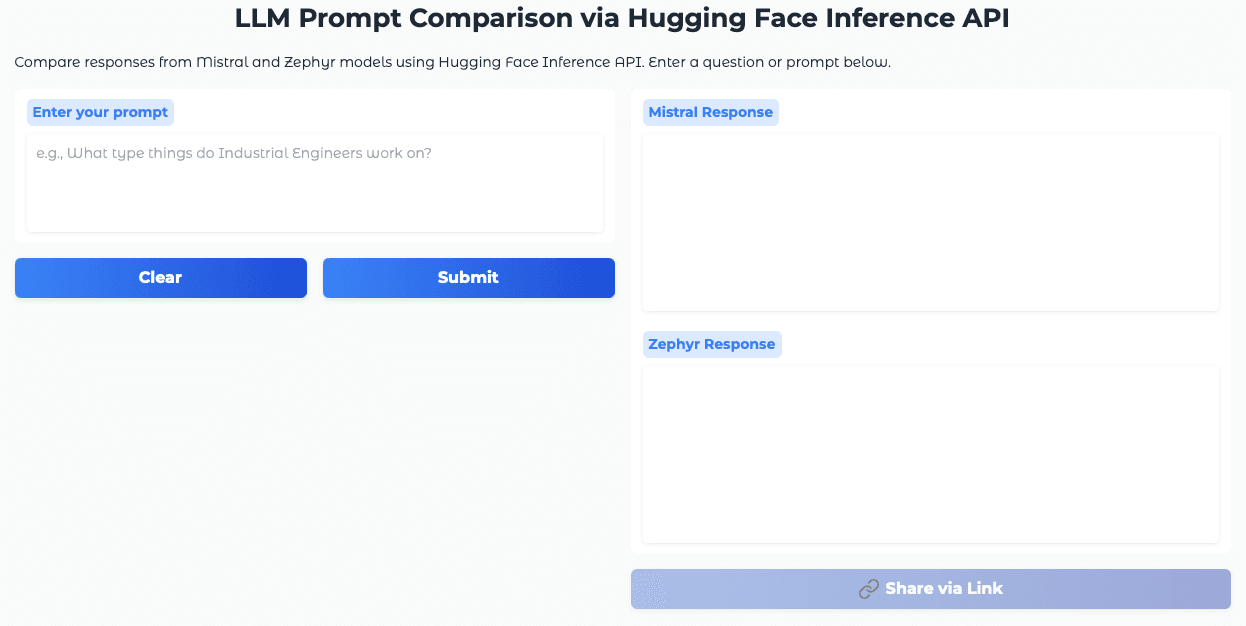

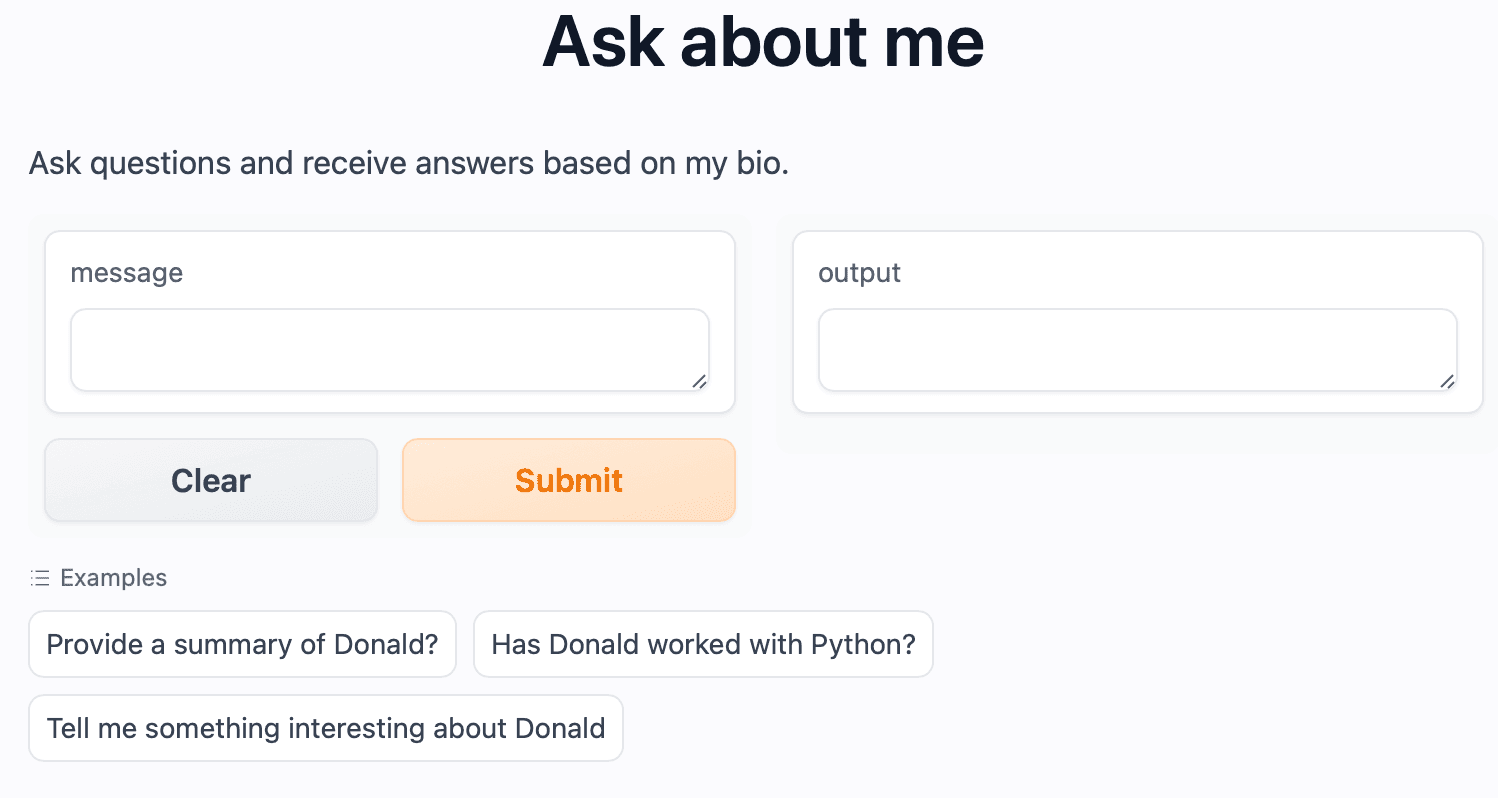

I am an Industrial Engineer utilizing the power of python to gain deeper insights in data.

I am currently learning Deep learning with TensorFlow

Using the Kaggle housing dataset, I practiced using machine learning to predict housing prices.

🔗 Links

Kaggle notebook

Tableau dashboard

Here is an outline of the steps I took to perform the analysis:

Data Exploration: Here are some of the helpful graphs created while exploring the data.

Histogram of Home Sales Prices

sns.displot(df_data['SalePrice'], bins=50, aspect=2, kde=True, color='darkblue') plt.title(f'Home Sales Price. Average: ${(df_data.SalePrice.mean()):,.0f}') plt.xlabel('Price ($)') plt.ylabel('# of Homes') plt.show()

Correlation heat map - This helps spot correlation between features and the target variable

SalesPrice

Average

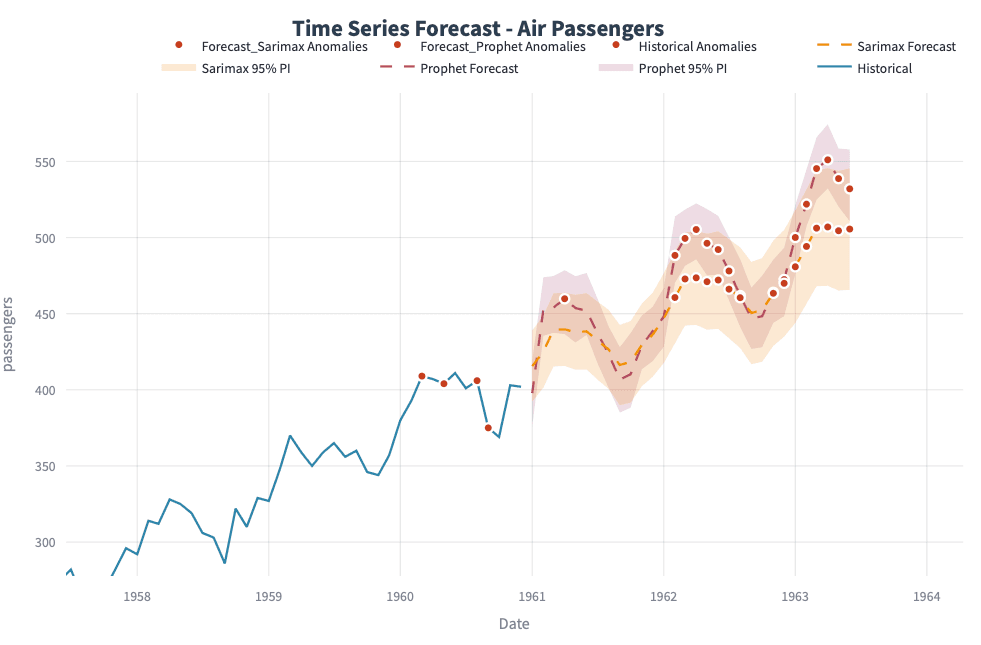

Sales Pricesover time ⬆️

📊 I also created a tableau dashboard to help me visualize the data. This wasn't necessary, but in the past I have used this as a way to spot correlations or relationships.

Data Cleaning

Created variables for

X(predictor) variables andy(target) variable.Train, test, split

Handle missing data and transform columns

# Handle Missing Data numeric_cols = X.select_dtypes(include=['number']).columns categorical_cols = X.select_dtypes(exclude=['number']).columns numeric_imputer = SimpleImputer(strategy='mean') categorical_imputer = SimpleImputer(strategy='most_frequent') # Create transformers for preprocessing numeric_transformer = Pipeline(steps=[ ('imputer', numeric_imputer), ('scaler', StandardScaler()) ]) categorical_transformer = Pipeline(steps=[ ('imputer', categorical_imputer), ('encoder', OneHotEncoder(handle_unknown='ignore')) ]) # Use ColumnTransformer to apply transformers to the appropriate columns from sklearn.compose import ColumnTransformer preprocessor = ColumnTransformer( transformers=[ ('num', numeric_transformer, numeric_cols), ('cat', categorical_transformer, categorical_cols) ])

XGB model and Predictions

# Create a XGBoost Regressor xgb = XGBRegressor(n_estimators=500, learning_rate=0.04)# Bundle preprocessing and modeling code in a pipeline my_pipeline = Pipeline(steps=[('preprocessor', preprocessor), ('model', xgb) ]) # Preprocessing of training data, fit model my_pipeline.fit(X_train, y_train) # Preprocessing of validation data, get predictions preds = my_pipeline.predict(X_valid) # Evaluate the model mae = mean_absolute_error(y_valid, preds) mse = mean_squared_error(y_valid, preds) r2 = r2_score(y_valid, preds) print(f"Mean Absolute Error (MAE): {mae:.2f}") print(f"Mean Squared Error (MSE): {mse:.2f}") print(f"R-squared (R2): {r2:.2f}")Hyper-parameter tuning

- Setting up parameters to tune

param_tuning = {

'model__learning_rate': [0.01, 0.1, 0.05],

'model__max_depth': [3, 5, 7, 10],

'model__min_child_weight': [1, 3, 5],

'model__subsample': [0.5, 0.7],

'model__colsample_bytree': [0.5, 0.7],

'model__n_estimators': [100, 200, 500, 1000],

'model__objective': ['reg:squarederror']

}

- Grid Search

xgb_model = XGBRegressor()

my_pipeline = Pipeline(steps=[('preprocessor', preprocessor),

('model', xgb_model)

])

xgb_cv = GridSearchCV(estimator=my_pipeline,

param_grid = param_tuning,

scoring = 'neg_mean_absolute_error', #MAE

#scoring = 'neg_mean_squared_error', #MSE

cv = 5)

xgb_cv.fit(X_train, y_train)

print("Best Score: ", xgb_cv.best_score_)

print("Best Params: ", xgb_cv.best_params_)

Hyper-parameter tuning helped decrease the model error 🎉